Detecting iOS 7 Touch Screen Gesture Motions


The next area of iOS touch screen event handling that we will look at in this book is the detection of gestures that involve movement. As covered in a previous chapter, a gesture refers to the activity that takes place between a finger touching the screen and that finger being lifted from the screen. In the chapter entitled An Example iOS 7 Touch, Multitouch and Tap Application we dealt with touches that did not involve any movement across the screen surface. We will now create an example that tracks the coordinates of a finger as it moves across the screen.

Note that this chapter assumes throughout that the reader has already reviewed the An Overview of iOS 7 Multitouch, Taps and Gestures chapter of this book.

The Example iOS 7 Gesture Application

This example application will detect when a single touch is made on the screen of the iPhone or iPad and then report the coordinates of that finger as it is moved across the screen surface.

Creating the Example Project

Start the Xcode environment and select the option to create a new project using the Single View Application template for either the iPhone or iPad device family. Enter TouchMotion as both the project name and the class prefix.

Designing the Application User Interface

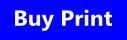

The application will display the X and Y coordinates of the touch and update these values in real-time as the finger moves across the screen. When the finger is lifted from the screen, the start and end coordinates of the gesture will then be displayed on two label objects in the user interface. Select the Main.storyboard file and, using Interface Builder, create a user interface such that it resembles the layout in Figure 45-1:

Figure 45-1

Be sure to stretch the labels so that they both extend to cover most of the width of the view.


Next, select the TouchMotionViewController.h file and verify that it declares outlets named xCoord and yCoord for the two labels. Then declare a property in which to store the coordinates of the start location on the screen:

#import <UIKit/UIKit.h>

@interface TouchMotionViewController : UIViewController
@property (strong, nonatomic) IBOutlet UILabel *xCoord;
@property (strong, nonatomic) IBOutlet UILabel *yCoord;
@property CGPoint startPoint;
@end

Implementing the touchesBegan Method

When the user first touches the screen the location coordinates need to be saved in the startPoint instance variable and those coordinates reported to the user. This can be achieved by implementing the touchesBegan method in the TouchMotionViewController.m file as follows:

- (void)touchesBegan:(NSSet *)touches withEvent:(UIEvent *)event
{
    // Only one finger is tracked, so any touch in the set will do
    UITouch *theTouch = [touches anyObject];

    // Save the start location, in the coordinate system of the main view
    _startPoint = [theTouch locationInView:self.view];

    CGFloat x = _startPoint.x;
    CGFloat y = _startPoint.y;

    _xCoord.text = [NSString stringWithFormat:@"x = %f", x];
    _yCoord.text = [NSString stringWithFormat:@"y = %f", y];
}

Implementing the touchesMoved Method

When the user’s finger moves across the screen the touchesMoved method will be called repeatedly until the motion stops. By implementing the touchesMoved method in our view controller, therefore, we can detect the motion and display the revised coordinates to the user:

- (void)touchesMoved:(NSSet *)touches withEvent:(UIEvent *)event
{
    UITouch *theTouch = [touches anyObject];

    // Current location of the finger within the main view
    CGPoint touchLocation = [theTouch locationInView:self.view];

    CGFloat x = touchLocation.x;
    CGFloat y = touchLocation.y;

    _xCoord.text = [NSString stringWithFormat:@"x = %f", x];
    _yCoord.text = [NSString stringWithFormat:@"y = %f", y];
}
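As an optional variation, UITouch also provides the previousLocationInView: method, which returns the location reported by the preceding touch event. The following is a minimal sketch, assuming the same xCoord and yCoord outlets, that shows the change in position since the last event instead of the absolute coordinates. It is an illustration only and would replace, rather than sit alongside, the touchesMoved method shown above:

```objc
#import "TouchMotionViewController.h"

@implementation TouchMotionViewController

// Illustrative variation, not the chapter's version of touchesMoved:
// reports how far the finger moved since the previous touch event.
- (void)touchesMoved:(NSSet *)touches withEvent:(UIEvent *)event
{
    UITouch *theTouch = [touches anyObject];

    CGPoint current  = [theTouch locationInView:self.view];
    CGPoint previous = [theTouch previousLocationInView:self.view];

    // Positive dx means movement to the right, positive dy means movement
    // down the screen (the UIKit y axis increases downward).
    _xCoord.text = [NSString stringWithFormat:@"dx = %f", current.x - previous.x];
    _yCoord.text = [NSString stringWithFormat:@"dy = %f", current.y - previous.y];
}

@end
```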

Implementing the touchesEnded Method

When the user’s finger lifts from the screen the touchesEnded method of the first responder is called. The final task, therefore, is to implement this method in our view controller such that it displays the start and end points of the gesture:

- (void)touchesEnded:(NSSet *)touches withEvent:(UIEvent *)event
{
    UITouch *theTouch = [touches anyObject];

    // Location at which the finger left the screen
    CGPoint endPoint = [theTouch locationInView:self.view];

    _xCoord.text = [NSString stringWithFormat:
                       @"start = %f, %f", _startPoint.x, _startPoint.y];
    _yCoord.text = [NSString stringWithFormat:
                       @"end = %f, %f", endPoint.x, endPoint.y];
}
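A touch sequence does not always finish with touchesEnded. If the system interrupts it (an incoming phone call, for example), the touchesCancelled method is called instead. The example in this chapter does not handle that case. The following is one possible sketch, assuming the same outlets and startPoint property, in which the wording of the message is purely illustrative:

```objc
#import "TouchMotionViewController.h"

@implementation TouchMotionViewController

// touchesBegan, touchesMoved and touchesEnded as implemented in this chapter
// would also appear here.

// Sketch only: called when the system cancels the touch sequence,
// in which case touchesEnded is not called for those touches.
- (void)touchesCancelled:(NSSet *)touches withEvent:(UIEvent *)event
{
    // There is no meaningful end point, so show the saved start point
    // and indicate that the gesture did not complete.
    _xCoord.text = [NSString stringWithFormat:
                       @"start = %f, %f", _startPoint.x, _startPoint.y];
    _yCoord.text = @"gesture cancelled";
}

@end
```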

Building and Running the Gesture Example

Build and run the application using the Run button located in the toolbar of the main Xcode project window. When the application starts (either in the iOS Simulator or on a physical device), touch the screen and drag to a new location before lifting your finger from the screen (or, in the iOS Simulator, click and drag before releasing the mouse button). During the motion the current coordinates will update in real time. Once the gesture is complete the start and end locations of the movement will be displayed.

Summary

Simply by implementing the standard touch event methods the motion of a gesture can easily be tracked by an iOS application. Much of a user’s interaction with applications, however, involves some very specific gesture types such as swipes and pinches. To write code to correlate finger movement on the screen with a specific gesture type would be extremely complex. Fortunately, iOS 7 makes this task easy through the use of gesture recognizers. In the next chapter, entitled Identifying Gestures using iOS 7 Gesture Recognizers, we will look at this concept in more detail.
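To give a rough sense of what the hand-written approach involves, the sketch below classifies a movement as a left, right, up or down swipe using only the start and end points recorded in this chapter. The function name SwipeDirection and the minDistance threshold are hypothetical and are not part of the example project or of any iOS API. The sketch ignores speed, the shape of the path and the number of fingers, which is where most of the real complexity lies:

```objc
#import <Foundation/Foundation.h>
#import <CoreGraphics/CoreGraphics.h>
#include <math.h>

// Hypothetical helper for illustration only.
// Returns @"left", @"right", @"up", @"down", or @"none" if the finger
// moved less than minDistance points along both axes.
static NSString *SwipeDirection(CGPoint start, CGPoint end, CGFloat minDistance)
{
    CGFloat dx = end.x - start.x;
    CGFloat dy = end.y - start.y;

    // Too short to count as a swipe in either direction
    if (fabs(dx) < minDistance && fabs(dy) < minDistance) {
        return @"none";
    }

    // The axis with the larger movement decides the direction
    if (fabs(dx) >= fabs(dy)) {
        return (dx > 0) ? @"right" : @"left";
    }

    // The UIKit y axis increases toward the bottom of the screen
    return (dy > 0) ? @"down" : @"up";
}
```

Such a function could be called from touchesEnded with _startPoint and the end point, but it would still misclassify slow drags and curved paths as swipes.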
