The iOS SDK provides several mechanisms for implementing audio playback from within an iOS app. The easiest technique from the app developer’s perspective is to use the AVAudioPlayer class, which is part of the AV Foundation Framework.

This chapter will provide an overview of audio playback using the AVAudioPlayer class. Once the basics have been covered, a tutorial is worked through step by step. The topic of recording audio from within an iOS app is covered in the next chapter entitled Recording Audio on iOS 17 with AVAudioRecorder.

Supported Audio Formats

The AV Foundation Framework supports the playback of various audio formats and codecs, using both software- and hardware-based decoding. The currently supported codecs and formats are as follows:

- AAC (MPEG-4 Advanced Audio Coding)

- ALAC (Apple Lossless)

- AMR (Adaptive Multi-rate)

- HE-AAC (MPEG-4 High-Efficiency AAC)

- iLBC (Internet Low Bitrate Codec)

- Linear PCM (uncompressed, linear pulse-code modulation)

- MP3 (MPEG-1 Audio Layer III)

- µ-law and A-law

If an audio file that is to be included in the app's resource bundle is not in a supported format, it can be converted before being added to the project using the macOS afconvert command-line tool. For details on how to use this tool, run the following command in a Terminal window:

```
afconvert -h
```

Receiving Playback Notifications

An app receives notifications from an AVAudioPlayer instance by declaring itself as the object’s delegate and implementing some or all of the following AVAudioPlayerDelegate protocol methods:

- audioPlayerDidFinishPlaying – Called when the audio playback finishes. An argument passed through to the method indicates whether the playback was completed successfully or failed due to an error.

- audioPlayerDecodeErrorDidOccur – Called when the AVAudioPlayer object encounters a decoding error during audio playback. An error object containing information about the nature of the problem is passed through to this method as an argument.

- audioPlayerBeginInterruption – Called when audio playback has been interrupted by a system event, such as an incoming phone call. Playback is automatically paused, and the current audio session is deactivated.

- audioPlayerEndInterruption – Called after an interruption ends. The current audio session is automatically reactivated, and playback may be resumed by calling the play method of the corresponding AVAudioPlayer instance.

Note that both interruption methods have been deprecated since iOS 8; new code should instead observe the interruption notifications posted by AVAudioSession.
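As a sketch of the delegate pattern, the following standalone class handles the two non-deprecated notifications. The class name PlaybackMonitor and its onFinish closure are hypothetical; in the tutorial later in this chapter, the view controller itself plays the delegate role.

```swift
import AVFoundation

/// A standalone delegate object (hypothetical name PlaybackMonitor).
/// It inherits from NSObject because AVAudioPlayerDelegate is an
/// Objective-C protocol that refines NSObjectProtocol.
final class PlaybackMonitor: NSObject, AVAudioPlayerDelegate {
    /// Receives `true` when playback reached the end normally.
    var onFinish: ((Bool) -> Void)?

    func audioPlayerDidFinishPlaying(_ player: AVAudioPlayer, successfully flag: Bool) {
        // flag is false if playback stopped because the audio could not be decoded.
        onFinish?(flag)
    }

    func audioPlayerDecodeErrorDidOccur(_ player: AVAudioPlayer, error: Error?) {
        print("Decode error: \(error?.localizedDescription ?? "unknown error")")
    }
}
```

Because AVAudioPlayer holds its delegate weakly, the monitor should be stored in a property alongside the player before being assigned with `audioPlayer?.delegate = monitor`; otherwise it is deallocated and no notifications arrive.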

Controlling and Monitoring Playback

Once an AVAudioPlayer instance has been created, audio playback may be controlled and monitored programmatically via the methods and properties of that instance. For example, the self-explanatory play, pause, and stop methods control playback, the volume property adjusts the playback volume (in the range 0.0 to 1.0), and the isPlaying property indicates whether the AVAudioPlayer object is currently playing audio.
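Minimal sketches of these calls follow; the helper names clampedVolume, togglePlayback, and stopAndRewind are hypothetical, and the tutorial project calls play(), stop(), and volume directly from its action methods. Note that, according to Apple's documentation, stop() does not reset currentTime, so a subsequent play() resumes where playback stopped.

```swift
import AVFoundation

/// Clamps a requested level to the 0.0...1.0 range used by AVAudioPlayer.volume.
func clampedVolume(_ value: Float) -> Float {
    min(max(value, 0.0), 1.0)
}

/// Hypothetical helper: lets a single button switch between playing and paused.
/// pause() keeps the current position, so the next play() continues from it.
func togglePlayback(_ player: AVAudioPlayer) {
    if player.isPlaying {
        player.pause()
    } else {
        player.play()
    }
}

/// Hypothetical helper: stops playback and rewinds to the beginning.
/// stop() on its own leaves currentTime unchanged.
func stopAndRewind(_ player: AVAudioPlayer) {
    player.stop()
    player.currentTime = 0
}
```

In an action method wired to a slider, for example, this could be used as `audioPlayer?.volume = clampedVolume(volumeControl.value)`. A UISlider defaults to the 0 to 1 range, so the clamp is purely defensive.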

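In addition, playback may be scheduled to begin later using the play(atTime:) instance method. Note that its argument is not a delay: it is an absolute time (a TimeInterval value) on the audio output device's clock. To start playback after a delay, add the required number of seconds to the player's deviceCurrentTime property and pass the result to play(atTime:).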
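The following minimal sketch shows that calculation. The helper name startPlayback(of:after:) is hypothetical, and the player is assumed to have already been initialized from an audio file.

```swift
import AVFoundation

/// Starts playback `delay` seconds from now (hypothetical helper).
/// play(atTime:) expects an absolute time on the output device's clock,
/// so the delay is added to the player's deviceCurrentTime.
@discardableResult
func startPlayback(of player: AVAudioPlayer, after delay: TimeInterval) -> Bool {
    let startTime = player.deviceCurrentTime + delay
    return player.play(atTime: startTime)   // false if playback could not be scheduled
}
```

For example, `startPlayback(of: player, after: 2.0)` schedules playback to begin two seconds later. Because the start time is absolute, the same computed value can also be passed to play(atTime:) on several players to start them in sync.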

The length of the loaded audio, in seconds, is available via the duration property, while the current playback position is stored in the currentTime property. Because currentTime can also be set, it may be used to seek to a different point in the audio.
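As a sketch, the two properties can drive a simple progress label, and writing to currentTime seeks within the audio. The helpers formatTime, progressText, and skipForward are hypothetical names used only for illustration.

```swift
import AVFoundation

/// Formats seconds as m:ss, e.g. for a progress label (hypothetical helper).
func formatTime(_ seconds: TimeInterval) -> String {
    let total = max(0, Int(seconds.rounded(.down)))
    return String(format: "%ld:%02ld", total / 60, total % 60)
}

/// Builds a "current / total" label from the player's timing properties.
func progressText(for player: AVAudioPlayer) -> String {
    "\(formatTime(player.currentTime)) / \(formatTime(player.duration))"
}

/// Seeks forward by setting currentTime, clamped to the end of the audio.
func skipForward(_ player: AVAudioPlayer, by seconds: TimeInterval) {
    player.currentTime = min(player.currentTime + seconds, player.duration)
}
```

A label refreshed periodically (for example, from a timer) could display `progressText(for: player)`.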

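Playback may also be configured to loop using the numberOfLoops property. A value of 0 (the default) plays the audio once, a positive value specifies the number of additional repetitions after the first play-through, and a negative value loops indefinitely until the stop method is called.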
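The sketch below, built around a hypothetical helper named numberOfLoops(forTotalPlays:), converts a desired total number of play-throughs into the value the numberOfLoops property expects.

```swift
import AVFoundation

/// Converts a desired total number of play-throughs into a numberOfLoops value
/// (hypothetical helper). numberOfLoops counts repeats after the first pass:
/// 0 plays once, 2 plays three times, and any negative value loops until stop().
func numberOfLoops(forTotalPlays totalPlays: Int?) -> Int {
    guard let totalPlays else { return -1 }   // nil: loop indefinitely
    return max(totalPlays - 1, 0)
}

/// Example: play the loaded audio three times in total.
func playThreeTimes(_ player: AVAudioPlayer) {
    player.numberOfLoops = numberOfLoops(forTotalPlays: 3)   // sets the property to 2
    player.play()
}
```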

Creating the Audio Example App

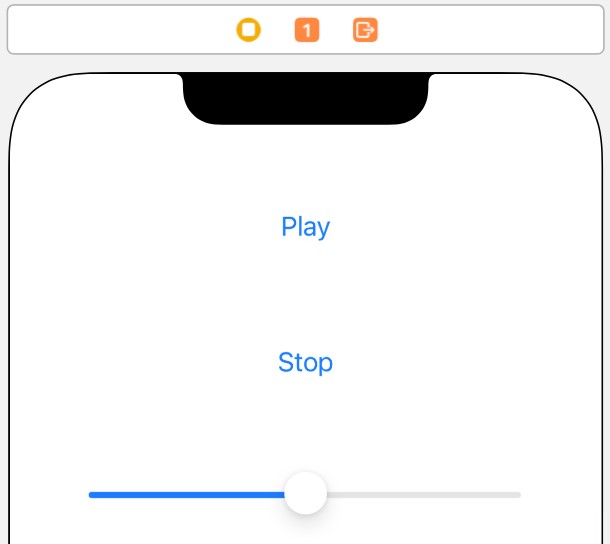

The remainder of this chapter will work through creating a simple iOS app that plays an audio file. The app’s user interface will consist of play and stop buttons to control playback and a slider to adjust the playback volume level.

Begin by launching Xcode and creating a new project using the iOS App template with the Swift and Storyboard options selected, entering AudioDemo as the product name.

Adding an Audio File to the Project Resources

To test audio playback, an audio file must be added to the project resources. Any file in one of the supported formats will do. This tutorial uses an MP3 file named Moderato.mp3, which can be found in the audiofiles folder of the sample code archive, downloadable from the following URL:

https://www.ebookfrenzy.com/web/ios16/

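Download and unzip the archive, locate the file in a Finder window, and drag and drop it onto the Project Navigator panel. In the options dialog that appears, make sure that the AudioDemo target is selected so that the file is copied into the app bundle.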
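If the player later fails to load the file, a quick way to confirm that it actually made it into the app bundle is a small debug check such as the following. The function name verifyBundledResource is hypothetical; it relies only on Foundation's Bundle.url(forResource:withExtension:), which returns nil when the resource is missing.

```swift
import Foundation

/// Debug check (hypothetical helper): reports whether a resource was copied
/// into the bundle. A missing file usually means it is not a member of the target.
@discardableResult
func verifyBundledResource(_ name: String, ext: String, in bundle: Bundle = .main) -> Bool {
    guard let url = bundle.url(forResource: name, withExtension: ext) else {
        print("\(name).\(ext) not found in \(bundle.bundlePath); check Target Membership")
        return false
    }
    print("Found \(url.lastPathComponent)")
    return true
}
```

Calling `verifyBundledResource("Moderato", ext: "mp3")` temporarily from viewDidLoad prints which case applies.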

Designing the User Interface

The app user interface will comprise two buttons labeled “Play” and “Stop” and a slider to adjust the playback volume. Select the Main.storyboard file, display the Library, drag and drop the components from the Library onto the View window, and modify their properties so that the interface appears as illustrated in Figure 88-1.

With the scene view selected within the storyboard canvas, display the Auto Layout Resolve Auto Layout Issues menu and select the Reset to Suggested Constraints menu option listed in the All Views in View Controller section of the menu.

Select the slider object in the view canvas, display the Assistant Editor panel, and verify that the editor is displaying the contents of the ViewController.swift file. Right-click on the slider object and drag the resulting line to a position just below the class declaration line in the Assistant Editor. Release the line and, in the resulting connection dialog, establish an outlet connection named volumeControl.

Right-click on the “Play” button object and drag the line to the area immediately beneath the viewDidLoad method in the Assistant Editor panel. Release the line and, within the resulting connection dialog, establish an Action method on the Touch Up Inside event configured to call a method named playAudio. Repeat these steps to establish an action connection on the “Stop” button to a method named stopAudio.

Right-click on the slider object and drag the line to the area immediately beneath the newly created actions in the Assistant Editor panel. Release the line and, within the resulting connection dialog, establish an Action method on the Value Changed event configured to call a method named adjustVolume.

Close the Assistant Editor panel, select the ViewController.swift file in the project navigator panel, and add an import directive and delegate declaration, together with a property to store a reference to the AVAudioPlayer instance as follows:

```swift
import UIKit
import AVFoundation

class ViewController: UIViewController, AVAudioPlayerDelegate {

    @IBOutlet weak var volumeControl: UISlider!

    var audioPlayer: AVAudioPlayer?
    .
    .
```

Implementing the Action Methods

The next step in our iOS audio player tutorial is implementing the action methods for the two buttons and the slider. Remaining in the ViewController.swift file, locate and implement these methods as outlined in the following code fragment:

```swift
@IBAction func playAudio(_ sender: Any) {
    audioPlayer?.play()
}

@IBAction func stopAudio(_ sender: Any) {
    audioPlayer?.stop()
}

@IBAction func adjustVolume(_ sender: Any) {
    audioPlayer?.volume = volumeControl.value
}
```

Creating and Initializing the AVAudioPlayer Object

Now that we have an audio file to play and the appropriate action methods written, the next step is to create an AVAudioPlayer instance and initialize it with a reference to the audio file. Since the object only needs to be initialized once, when the view controller's view is loaded, a good place to write this code is the viewDidLoad method of the ViewController.swift file:

```swift
override func viewDidLoad() {
    super.viewDidLoad()

    // Locate the audio file in the app bundle and build a file URL for it.
    if let bundlePath = Bundle.main.path(forResource: "Moderato", ofType: "mp3") {
        let url = URL(fileURLWithPath: bundlePath)

        do {
            audioPlayer = try AVAudioPlayer(contentsOf: url)
            audioPlayer?.delegate = self
            audioPlayer?.prepareToPlay()
        } catch {
            print("audioPlayer error \(error.localizedDescription)")
        }
    }
}
```

In the above code, a URL is created from the name and type of the audio file added to the project resources. Remember to change these values if you are using a different audio file in your own projects.

Next, an AVAudioPlayer instance is created using the URL of the audio file. Assuming no errors occur, the current class is designated as the delegate for the audio player object. Finally, a call is made to the audioPlayer object's prepareToPlay method, which preloads the playback buffers so that playback starts with minimal delay when the user taps the Play button. If the file cannot be found or loaded, audioPlayer remains nil and, because the action methods use optional chaining, the buttons and slider simply do nothing.
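The initialization steps above can also be packaged into a reusable function. The sketch below is not part of the tutorial project: makePlayer(resource:withExtension:delegate:bundle:) and AudioLoadError are hypothetical names. It uses Bundle.url(forResource:withExtension:), which returns a URL directly, and reports a missing file as an error instead of silently leaving the player nil.

```swift
import AVFoundation

/// Hypothetical error type for a resource that is not in the bundle.
enum AudioLoadError: Error {
    case missingResource(String)
}

/// Loads a bundled audio file, assigns the delegate, and preloads buffers.
func makePlayer(resource: String, withExtension ext: String,
                delegate: AVAudioPlayerDelegate? = nil,
                bundle: Bundle = .main) throws -> AVAudioPlayer {
    guard let url = bundle.url(forResource: resource, withExtension: ext) else {
        throw AudioLoadError.missingResource("\(resource).\(ext)")
    }
    let player = try AVAudioPlayer(contentsOf: url)   // throws if the data cannot be read
    player.delegate = delegate                        // held weakly by the player
    player.prepareToPlay()
    return player
}
```

In viewDidLoad this would become `audioPlayer = try makePlayer(resource: "Moderato", withExtension: "mp3", delegate: self)` inside a do/catch block.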

Implementing the AVAudioPlayerDelegate Protocol Methods

As previously discussed, by declaring our view controller as the delegate for our AVAudioPlayer instance, our app will be able to receive notifications relating to the playback. Templates of these methods are as follows and may be placed in the ViewController.swift file:

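```swift
func audioPlayerDidFinishPlaying(_ player: AVAudioPlayer, successfully flag: Bool) {
}

func audioPlayerDecodeErrorDidOccur(_ player: AVAudioPlayer, error: Error?) {
}

// Deprecated since iOS 8; AVAudioSession interruption notifications replace it.
func audioPlayerBeginInterruption(_ player: AVAudioPlayer) {
}

// Deprecated since iOS 8. The unnamed first parameter and withOptions label
// must match the protocol, or the method is never called.
func audioPlayerEndInterruption(_ player: AVAudioPlayer, withOptions flags: Int) {
}
```

For this tutorial, it is not necessary to implement any code for these methods, and they are provided solely for completeness.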
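For apps that do need to react to interruptions, the replacement for the deprecated delegate methods is to observe AVAudioSession.interruptionNotification. The following sketch assumes two hypothetical names, shouldResumeAfterInterruption and InterruptionObserver, and resumes playback only when the system indicates that resuming is appropriate.

```swift
import AVFoundation

/// Returns true when an interruption has ended and the system suggests resuming.
func shouldResumeAfterInterruption(_ userInfo: [AnyHashable: Any]?) -> Bool {
    guard let rawType = userInfo?[AVAudioSessionInterruptionTypeKey] as? UInt,
          AVAudioSession.InterruptionType(rawValue: rawType) == .ended,
          let rawOptions = userInfo?[AVAudioSessionInterruptionOptionKey] as? UInt
    else { return false }
    return AVAudioSession.InterruptionOptions(rawValue: rawOptions).contains(.shouldResume)
}

/// Hypothetical helper: resumes `player` after an interruption when appropriate.
final class InterruptionObserver {
    private var token: NSObjectProtocol?

    init(player: AVAudioPlayer) {
        token = NotificationCenter.default.addObserver(
            forName: AVAudioSession.interruptionNotification,
            object: AVAudioSession.sharedInstance(),
            queue: .main
        ) { [weak player] notification in
            // When an interruption begins, the system has already paused playback.
            if shouldResumeAfterInterruption(notification.userInfo) {
                player?.play()
            }
        }
    }

    deinit {
        if let token { NotificationCenter.default.removeObserver(token) }
    }
}
```

An InterruptionObserver would be created once the player exists and stored in a property of the view controller; the observer is removed when that object is deallocated.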

Building and Running the App

Once all the requisite changes have been made and saved, test the app in the iOS simulator or a physical device by clicking on the run button in the Xcode toolbar. Once the app appears, click on the Play button to begin playback. Next, adjust the volume using the slider and stop playback using the Stop button. If the playback is not audible on the device, ensure that the switch on the side of the device is not set to silent mode.
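Muting by the silent switch is a consequence of the default audio session category, soloAmbient. For an app whose main purpose is playing audio, the session can instead be given the playback category, which is not silenced by the switch. A minimal sketch, assuming a hypothetical helper named configurePlaybackSession that is called from viewDidLoad before playback starts:

```swift
import AVFoundation

/// Configures the shared audio session for media playback (hypothetical helper).
/// Unlike the default soloAmbient category, .playback is not silenced
/// by the device's silent mode switch.
func configurePlaybackSession() {
    let session = AVAudioSession.sharedInstance()
    do {
        try session.setCategory(.playback, mode: .default)
        try session.setActive(true)
    } catch {
        print("Audio session configuration failed: \(error.localizedDescription)")
    }
}
```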

Summary

The AVAudioPlayer class, part of the AVFoundation framework, provides a simple way to play audio from within iOS apps. In addition to playing back audio, the class provides methods and properties for controlling playback, such as starting and stopping it and changing the playback volume. By implementing the methods defined by the AVAudioPlayerDelegate protocol, the app may also be configured to receive notifications of events related to the playback, such as playback ending or an error occurring during the audio decoding process.