In addition to audio playback, the iOS AV Foundation framework can record sound using the AVAudioRecorder class. This chapter works step-by-step through a tutorial that demonstrates how to use AVAudioRecorder to record audio.

An Overview of the AVAudioRecorder Tutorial

This chapter aims to create an iOS app to record and play audio. It will do so by creating an instance of the AVAudioRecorder class and configuring it with a file to contain the audio and a range of settings dictating the quality and format of the audio. Finally, playback of the recorded audio file will be performed using the AVAudioPlayer class, which was covered in detail in the chapter entitled Playing Audio on iOS 17 using AVAudioPlayer.

Audio recording and playback will be controlled by buttons in the user interface that are connected to action methods which, in turn, will make appropriate calls to the instance methods of the AVAudioRecorder and AVAudioPlayer objects, respectively.

The view controller of the example app will also implement the AVAudioRecorderDelegate and AVAudioPlayerDelegate protocols and several corresponding delegate methods to receive notification of events relating to playback and recording.

Creating the Recorder Project

Begin by launching Xcode and creating a new single view-based app named Record using the Swift programming language.

Configuring the Microphone Usage Description

Access to the microphone from within an iOS app is considered a potential risk to the user’s privacy. Therefore, when an app attempts to access the microphone, the operating system will display a dialog asking the user to authorize the app to proceed. This dialog includes a message from the app explaining why it needs the microphone. The message must be specified within the Info.plist file using the NSMicrophoneUsageDescription key. If this key is absent, the app will crash at runtime when it attempts to access the microphone.

To add this setting:

- Select the Record entry at the top of the Project navigator panel and select the Info tab in the main panel.

- Click on the + button contained within the last line of properties in the Custom iOS Target Properties section.

- Select the Privacy – Microphone Usage Description item from the resulting menu.

Once the key has been added, double-click in the corresponding value column and enter the following text:

The audio recorded by this app is stored securely and is not shared.

Once the rest of the code has been added and the app is launched for the first time, a dialog containing the usage message will appear. If the user taps the OK button, microphone access will be granted to the app.
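Because a missing usage description only shows up as a crash at runtime, it can be useful to check for the key during development. The following is a minimal sketch of such a check, not part of the tutorial project; the helper name microphoneUsageDescription(in:) is hypothetical, and it simply looks up the NSMicrophoneUsageDescription key in an Info.plist-style dictionary:

```swift
import Foundation

/// Returns the microphone usage message from an Info.plist-style dictionary,
/// or nil when the key is missing or the text is empty.
/// Note: this is a hypothetical helper for illustration, not an Apple API.
func microphoneUsageDescription(in info: [String: Any]?) -> String? {
    guard let text = info?["NSMicrophoneUsageDescription"] as? String else {
        return nil
    }
    let trimmed = text.trimmingCharacters(in: .whitespacesAndNewlines)
    return trimmed.isEmpty ? nil : trimmed
}

// In the app, the dictionary would typically come from the main bundle:
// let message = microphoneUsageDescription(in: Bundle.main.infoDictionary)
```

If the function returns nil when passed Bundle.main.infoDictionary, the key has most likely not been added to the target's properties.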

Designing the User Interface

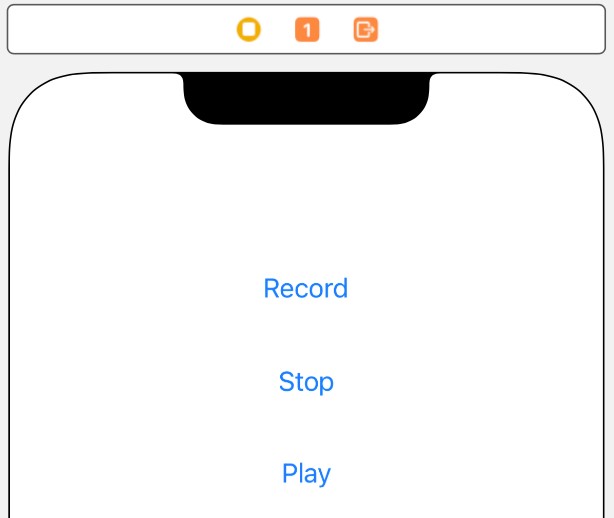

Select the Main.storyboard file and, once loaded, drag Button objects from the Library (View -> Utilities -> Show Library) and position them on the View window. Once placed in the view, modify the text on each button so that the user interface appears as illustrated in Figure 89-1:

With the scene view selected within the storyboard canvas, display the Resolve Auto Layout Issues menu and select the Reset to Suggested Constraints option listed in the All Views in View Controller section of the menu.

Select the “Record” button object in the view canvas, display the Assistant Editor panel and verify that the editor is displaying the contents of the ViewController.swift file. Next, right-click on the Record button object and drag to a position just below the class declaration line in the Assistant Editor. Release the line and establish an outlet connection named recordButton. Repeat these steps to establish outlet connections for the “Play” and “Stop” buttons named playButton and stopButton, respectively.

Continuing to use the Assistant Editor, establish Action connections from the three buttons to methods named recordAudio, playAudio, and stopAudio.

Close the Assistant Editor panel, select the ViewController.swift file and modify it to import the AVFoundation framework, declare adherence to some delegate protocols, and add properties to store references to AVAudioRecorder and AVAudioPlayer instances:

import UIKit

import AVFoundation

class ViewController: UIViewController, AVAudioPlayerDelegate, AVAudioRecorderDelegate {

var audioPlayer: AVAudioPlayer?

var audioRecorder: AVAudioRecorder?

.

.

Creating the AVAudioRecorder Instance

When the app is first launched, an instance of the AVAudioRecorder class needs to be created. This will be initialized with the URL of a file into which the recorded audio will be saved. Also passed as an argument to the initialization method is a Dictionary object indicating the settings for the recording, such as bit rate, sample rate, and audio quality. A full description of the settings available may be found in the appropriate Apple iOS reference materials.
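As a rough illustration of what such a settings dictionary can contain, the sketch below names the audio format explicitly and comments each key. The variable name aacSettings and all of the values are assumptions chosen for illustration; they are not the settings used in this tutorial:

```swift
import AVFoundation

// A sketch of a recording settings dictionary with an explicit format.
// The values are illustrative assumptions, not recommendations.
let aacSettings: [String: Any] = [
    AVFormatIDKey: Int(kAudioFormatMPEG4AAC),               // encode as AAC
    AVSampleRateKey: 44100.0,                               // samples per second
    AVNumberOfChannelsKey: 1,                               // mono
    AVEncoderAudioQualityKey: AVAudioQuality.high.rawValue, // encoder quality
    AVEncoderBitRateKey: 64_000                             // bits per second
]
```

A dictionary of this shape can be passed as the settings argument when the recorder is created. Which keys are honored depends on the chosen format, so Apple's reference material remains the authority on valid combinations.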

As is often the case, a good location to initialize the AVAudioRecorder instance is within a method to be called from the viewDidLoad method of the view controller located in the ViewController.swift file. Select the file in the project navigator and modify it so that it reads as follows:

.

.

override func viewDidLoad() {

super.viewDidLoad()

audioInit()

}

func audioInit() {

playButton.isEnabled = false

stopButton.isEnabled = false

let fileMgr = FileManager.default

let dirPaths = fileMgr.urls(for: .documentDirectory,

in: .userDomainMask)

let soundFileURL = dirPaths[0].appendingPathComponent("sound.caf")

let recordSettings =

[AVEncoderAudioQualityKey: AVAudioQuality.min.rawValue,

AVEncoderBitRateKey: 16,

AVNumberOfChannelsKey: 2,

AVSampleRateKey: 44100.0] as [String : Any]

let audioSession = AVAudioSession.sharedInstance()

do {

try audioSession.setCategory(

AVAudioSession.Category.playAndRecord, mode: .default)

} catch let error as NSError {

print("audioSession error: \(error.localizedDescription)")

}

do {

try audioRecorder = AVAudioRecorder(url: soundFileURL,

settings: recordSettings)

audioRecorder?.delegate = self

audioRecorder?.prepareToRecord()

} catch let error as NSError {

print("audioRecorder error: \(error.localizedDescription)")

}

}

.

.

Since no audio has been recorded yet, the above method disables the Play and Stop buttons. It then identifies the app’s documents directory and constructs a URL to a file in that location named sound.caf. Next, a Dictionary object containing the recording quality settings is created, the audio session is configured for both playback and recording, and an instance of the AVAudioRecorder class is created. Finally, assuming no errors are encountered, the audioRecorder instance is prepared to begin recording when requested to do so by the user.
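The URL construction step can also be isolated into a small function, which avoids indexing into the array of directory URLs. This is a minimal sketch under the assumption that a separate helper is wanted; the name recordingURL(named:) is hypothetical and does not appear in the tutorial project:

```swift
import Foundation

/// Builds a URL for `fileName` inside the app's Documents directory.
/// Note: `recordingURL(named:)` is a hypothetical helper name.
func recordingURL(named fileName: String) -> URL? {
    let documents = FileManager.default.urls(for: .documentDirectory,
                                             in: .userDomainMask)
    // `first` yields nil instead of trapping if no directory is reported.
    return documents.first?.appendingPathComponent(fileName)
}

// Possible use inside audioInit():
// guard let soundFileURL = recordingURL(named: "sound.caf") else { return }
```

Returning an optional leaves the decision about how to handle a missing directory to the caller rather than crashing on dirPaths[0].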

Implementing the Action Methods

The next step is to implement the action methods connected to the three button objects. Select the ViewController.swift file and modify it as outlined in the following code excerpt:

@IBAction func recordAudio(_ sender: Any) {

if audioRecorder?.isRecording == false {

playButton.isEnabled = false

stopButton.isEnabled = true

audioRecorder?.record()

}

}

@IBAction func stopAudio(_ sender: Any) {

stopButton.isEnabled = false

playButton.isEnabled = true

recordButton.isEnabled = true

if audioRecorder?.isRecording == true {

audioRecorder?.stop()

} else {

audioPlayer?.stop()

}

}

@IBAction func playAudio(_ sender: Any) {

if audioRecorder?.isRecording == false {

stopButton.isEnabled = true

recordButton.isEnabled = false

do {

try audioPlayer = AVAudioPlayer(contentsOf:

(audioRecorder?.url)!)

audioPlayer!.delegate = self

audioPlayer!.prepareToPlay()

audioPlayer!.play()

} catch let error as NSError {

print("audioPlayer error: \(error.localizedDescription)")

}

}

}

Each of the above methods enables and disables the appropriate buttons in the user interface and calls the AVAudioRecorder and AVAudioPlayer instances to record or play back audio.
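The button handling spread across these methods can be summarized as a small state table. The sketch below is one way to express it and is not part of the tutorial project; the AudioState and ButtonStates types and the buttonStates(for:) function are hypothetical names, and the values mirror the states in which the methods above (together with the initial setup) leave the buttons:

```swift
/// The situations the interface can be in (hypothetical names).
enum AudioState {
    case idle(hasRecording: Bool)
    case recording
    case playing
}

/// Which of the three buttons are enabled.
struct ButtonStates: Equatable {
    let record: Bool
    let stop: Bool
    let play: Bool
}

/// Maps a state to the button configuration used in this chapter.
func buttonStates(for state: AudioState) -> ButtonStates {
    switch state {
    case .idle(let hasRecording):
        // Play is only useful once something has been recorded.
        return ButtonStates(record: true, stop: false, play: hasRecording)
    case .recording:
        // recordAudio leaves the Record button untouched and disables Play.
        return ButtonStates(record: true, stop: true, play: false)
    case .playing:
        // playAudio disables Record and leaves the Play button untouched.
        return ButtonStates(record: false, stop: true, play: true)
    }
}
```

Keeping the rules in one function makes it easier to spot states that might deserve tightening, such as whether Record should remain enabled while a recording is in progress.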

Implementing the Delegate Methods

To receive notification about the success or failure of recording and playback, it is necessary to implement some delegate methods. For this tutorial, we will implement the methods that report encoding and decoding errors and the methods that are called when recording and playback finish. Once again, edit the ViewController.swift file and add these methods as follows:

func audioPlayerDidFinishPlaying(_ player: AVAudioPlayer,

successfully flag: Bool) {

recordButton.isEnabled = true

stopButton.isEnabled = false

}

func audioPlayerDecodeErrorDidOccur(_ player: AVAudioPlayer, error: Error?) {

print("Audio Play Decode Error")

}

func audioRecorderDidFinishRecording(_ recorder: AVAudioRecorder, successfully flag: Bool) {

}

func audioRecorderEncodeErrorDidOccur(_ recorder: AVAudioRecorder, error: Error?) {

print("Audio Record Encode Error")

}

Testing the App

Configure Xcode to install the app on a device or simulator session, then build and run the app by clicking on the run button in the main toolbar. Once the app has loaded, the operating system will seek permission to allow it to access the microphone. Allow access and touch the Record button to record some sound. Touch the Stop button when the recording is complete, then use the Play button to play back the audio.
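If playback is silent, one quick check during testing is whether the recording file exists and contains data. The following is a minimal sketch of such a check, not part of the tutorial project; the helper name fileSize(at:) is hypothetical:

```swift
import Foundation

/// Returns the size in bytes of the file at `url`,
/// or nil if the file is missing or its attributes cannot be read.
/// Note: `fileSize(at:)` is a hypothetical helper name.
func fileSize(at url: URL) -> Int? {
    let attributes = try? FileManager.default.attributesOfItem(atPath: url.path)
    return (attributes?[.size] as? NSNumber)?.intValue
}

// Possible use after stopping a recording:
// if let url = audioRecorder?.url, let bytes = fileSize(at: url) {
//     print("Recorded file size: \(bytes) bytes")
// }
```

A nil result suggests that the file was never created, which would point toward the recorder setup or microphone permission rather than playback.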

Summary

This chapter has provided an overview and example of using the AVAudioRecorder and AVAudioPlayer classes of the AVFoundation framework to record and play back audio from within an iOS app. The chapter also outlined the need to configure the microphone usage privacy key-value pair within the Info.plist file to obtain microphone access permission from the user.